FOMO Filter for AI News in Detail

An experiment in weekly AI briefings revealed the obvious: the code wasn't the problem. The model was.

When I wrote about building a filter for the AI news flood, the weekly briefing agent wasn't a thought experiment. I'd been running one for myself already. Four hours to build, a few cents a month to run. Every Sunday evening it lands on my phone. Four questions, clean layout, filtered through my actual work.

I've been using it for months now. Long enough to know what works, what broke, and what I had to rewrite three times before the output stopped being useless.

This isn't a step-by-step tutorial. If you want one, you can reverse-engineer mine. What's more interesting: the things that almost killed the project before it started being useful.

Why I didn't use n8n

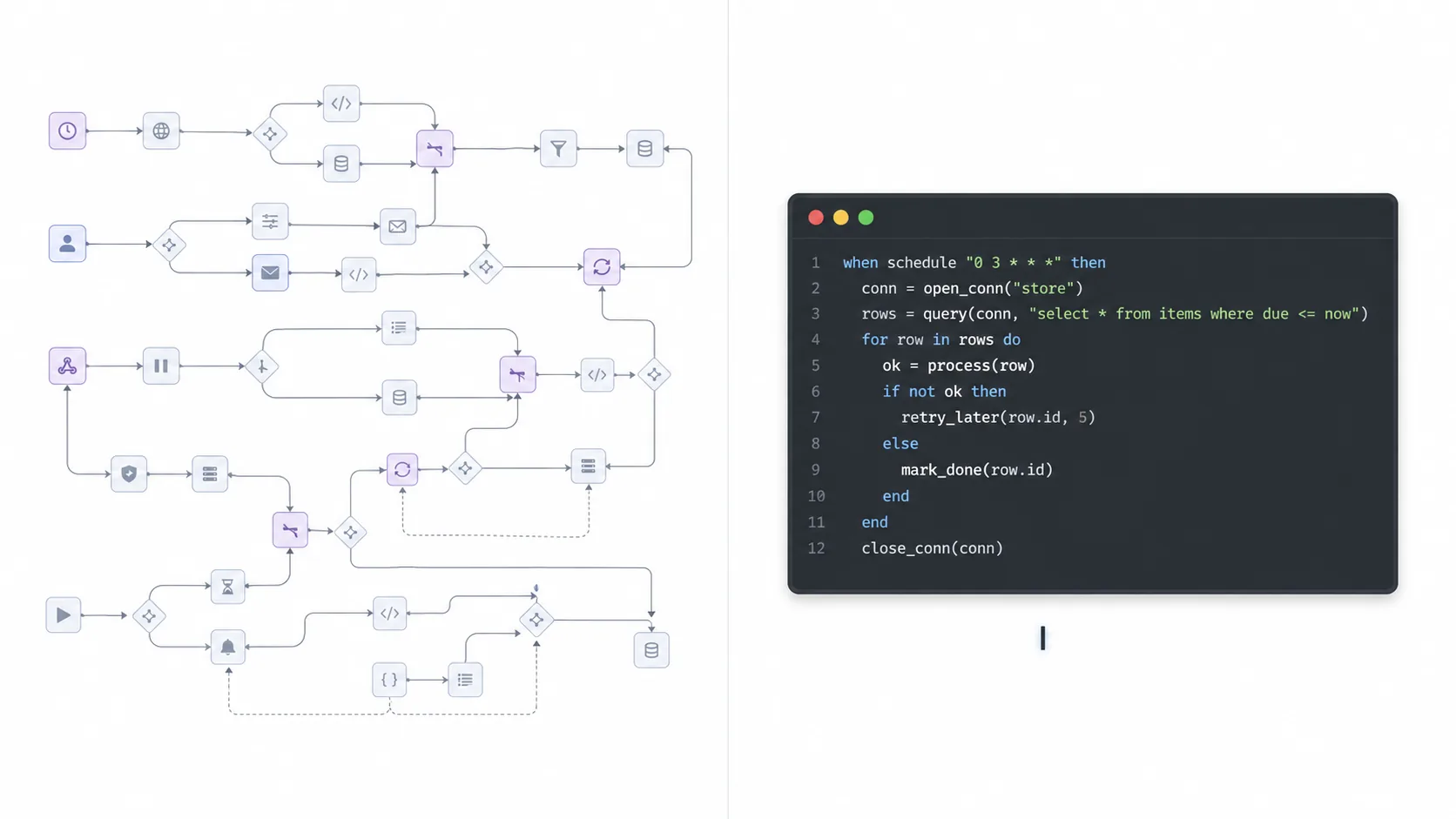

In the original post I suggested n8n. Solid tool, visual workflows, free tier. I tried it. It works. But for this specific thing I went simpler.

One serverless function. One API call to Grok via xAI's Agent Tools. One push notification. That's it.

The workflow doesn't need branching, retries, conditional logic, or a dashboard. It's: fetch, filter, push. Cron trigger, 90 seconds of work, done. I deployed it on a Cloudflare Worker, but any serverless platform with a free tier would do the job. Ten kilobytes of code. Editing the prompt means editing one markdown file and redeploying in three seconds. No clicking through dialogs, no subscription, no separate account to manage.
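The fetch, filter, push shape fits in a few lines. What follows is a platform-neutral Python sketch, not the Worker code itself: `run_briefing`, `ask_model`, and `push` are hypothetical names, and the two callables stand in for the request to the search-enabled model and for the push notification.

```python
from typing import Callable


def run_briefing(
    prompt: str,
    ask_model: Callable[[str], str],
    push: Callable[[str], None],
) -> str:
    """One scheduled run: the model searches and filters, then the result is pushed.

    ask_model and push are injected so the platform-specific parts
    (HTTP call to the model API, notification service) stay outside this function.
    """
    briefing = ask_model(prompt).strip()
    if not briefing:
        # Fail loudly instead of pushing an empty notification.
        raise ValueError("model returned an empty briefing")
    push(briefing)
    return briefing


if __name__ == "__main__":
    # Stand-ins for the real API call and push service (assumptions for the demo).
    sent: list[str] = []
    run_briefing(
        "Summarize this week's AI news.",
        ask_model=lambda prompt: "Nothing worth reporting this week.",
        push=sent.append,
    )
    print(sent)
```

A cron trigger calls the equivalent of `run_briefing` once per cadence. There is nothing to branch on, which is why a workflow tool adds little here.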

The lesson: pick the tool that fits the shape of the problem. A weekly briefing agent isn't really a workflow. It's a script that runs on a timer. Treat it as such.

The hardest part is not the code

This surprised me. I expected the integration work to be the tricky bit. API authentication, push notification plumbing, the deployment pipeline. All of that was done in one afternoon.

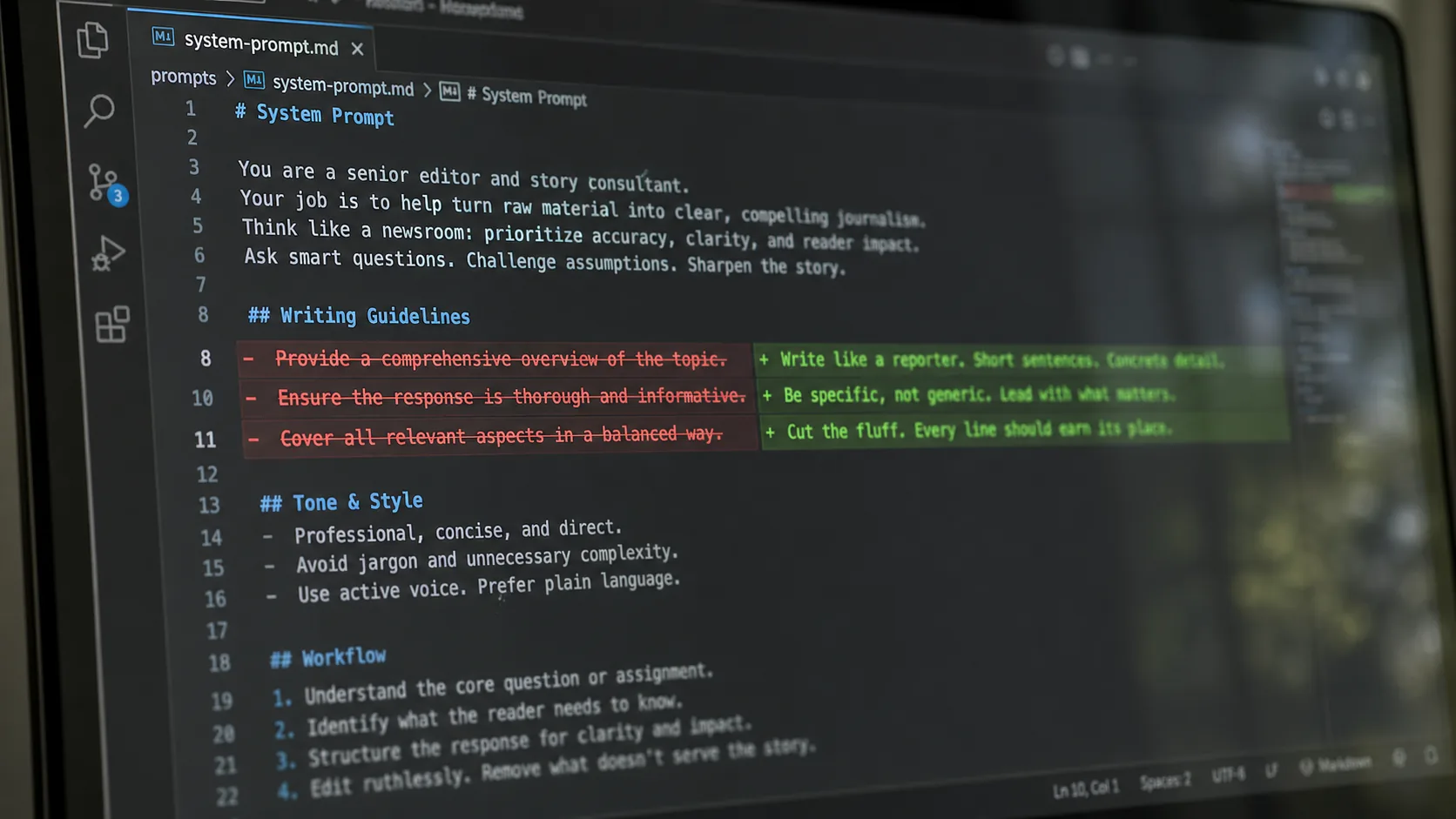

The hard part was the prompt. And it kept being hard for four iterations.

The model wanted to impress me. Early runs came back with polished paragraphs about how revolutionary each release was. I had to explicitly say: write like a reporter on deadline. Short sentences. No marketing speak. You can be critical. The tone flipped from hype to signal the moment I gave that instruction.

The abstraction level was wrong. The first working version gave me product updates. "Cloudflare launched AI Search with Storage." Technically correct, completely useless. What I wanted was paradigm-level: "Cloudflare is shifting from stateless compute to agent-native infrastructure." Same underlying news, different altitude. Three prompt rewrites before the model understood the difference.

It filled sections just to look complete. If there wasn't a real release in the "new category" slot, the model would stretch something marginal to fit. I had to explicitly allow it to leave sections empty. "Nothing worth reporting this week" is a valid output. Most weeks, at least one section will be empty. That's the filter working.

It hallucinated. Twice. Invented URLs, fake paper IDs. Both times because I'd over-constrained the filter. When the criteria were too narrow and real matches were thin, the model would rather fabricate than return nothing. The fix wasn't "don't hallucinate"; in my runs the model simply ignored that instruction. The fix was loosening the constraint and stating the preference explicitly: two real items beat three with one invented.
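Taken together, those four fixes amount to a short list of explicit rules in the prompt. The sketch below shows one way to keep them in one place. The rule wording paraphrases this post and is not my actual prompt, and `RULES` and `build_prompt` are hypothetical names.

```python
# Paraphrased from the four lessons above; illustrative wording only.
RULES = [
    "Write like a reporter on deadline. Short sentences. No marketing speak. You can be critical.",
    "Report paradigm-level shifts, not individual product updates.",
    'Leave a section empty if nothing qualifies. "Nothing worth reporting this week" is a valid output.',
    "Two real items beat three with one invented.",
]


def build_prompt(focus: str, rules: list[str] = RULES) -> str:
    """Combine the reader's focus with numbered rules into one prompt string."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        f"Filter this week's AI news for someone working on: {focus}\n\n"
        f"Rules:\n{numbered}"
    )


if __name__ == "__main__":
    print(build_prompt("serverless agents"))
```

Keeping the rules as a list makes each iteration a one-line change, which is easier to compare against the output it produced.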

The interesting work in these systems is almost never the code. It's the prompt. And the prompt only gets good through iteration against real output.

What I actually get now

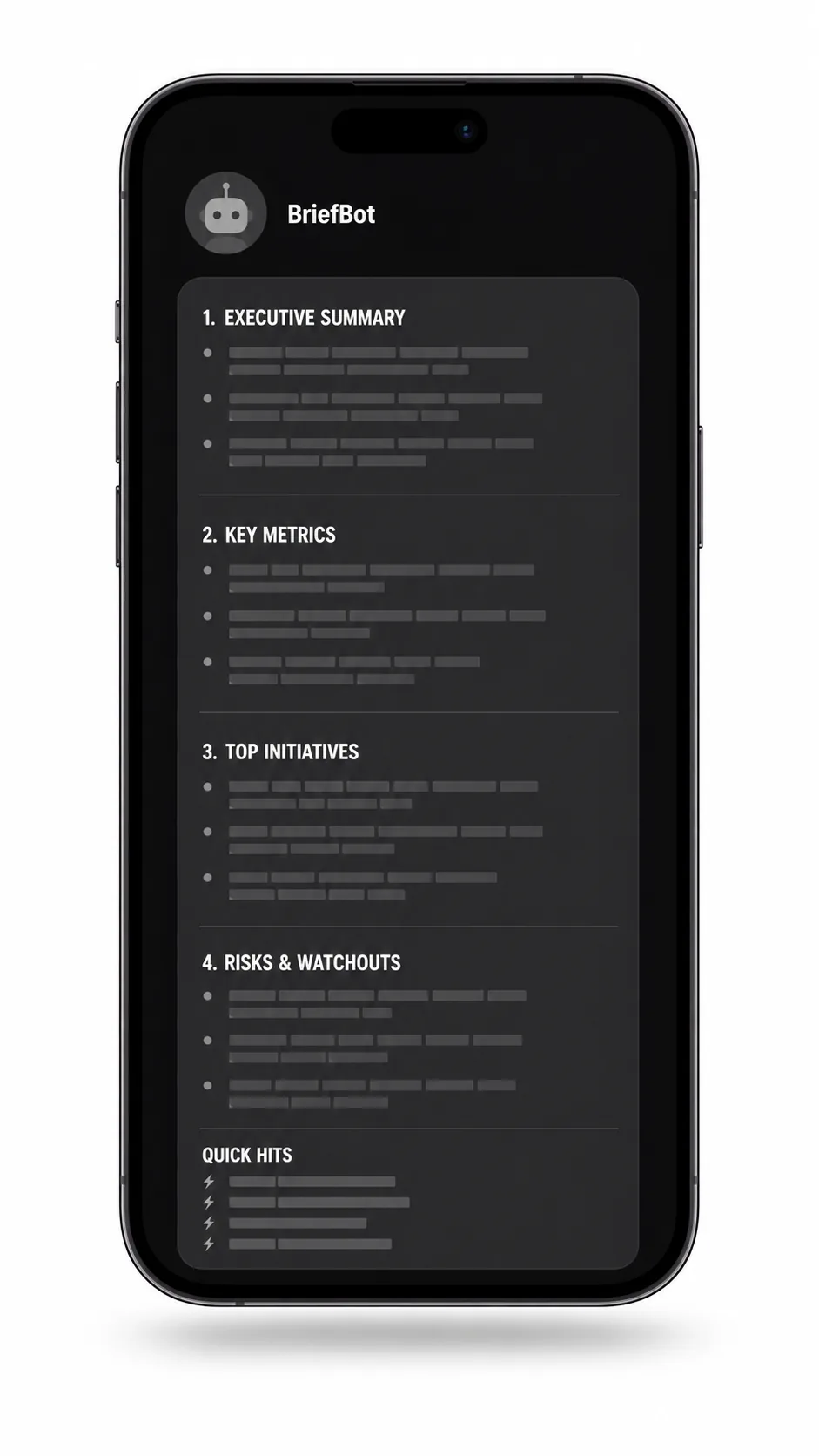

A message on my phone, every Sunday, with this shape:

- What actually improved my current work? Things that plug into my existing stack today.

- What enables something new? Capability shifts, not product launches.

- What is overhyped this week? Named explicitly, so I stop seeing the same tweets five times.

- What will I test for 30 minutes? One concrete action, with a link.

Plus a handful of one-liners under "Quick Hits" and a single footnote for anything outside the window that's still leading its field.
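That shape is simple enough to write down as a data structure. The following is a minimal sketch under my own assumptions: `Briefing`, `render`, and the plain-text layout are hypothetical, and the footnote is left out for brevity. The point it illustrates is that an empty section is rendered explicitly rather than padded.

```python
from dataclasses import dataclass, field

EMPTY = "Nothing worth reporting this week."

SECTIONS = [
    "What actually improved my current work?",
    "What enables something new?",
    "What is overhyped this week?",
    "What will I test for 30 minutes?",
]


@dataclass
class Briefing:
    # Maps a section title to its items; a missing or empty list is allowed.
    items: dict[str, list[str]] = field(default_factory=dict)
    quick_hits: list[str] = field(default_factory=list)


def render(briefing: Briefing) -> str:
    """Render the four sections in fixed order, marking empty ones explicitly."""
    lines: list[str] = []
    for title in SECTIONS:
        lines.append(title)
        entries = briefing.items.get(title, [])
        if entries:
            lines.extend(f"- {entry}" for entry in entries)
        else:
            lines.append(EMPTY)
        lines.append("")  # blank line between sections
    if briefing.quick_hits:
        lines.append("Quick Hits")
        lines.extend(f"- {hit}" for hit in briefing.quick_hits)
    return "\n".join(lines).rstrip()


if __name__ == "__main__":
    demo = Briefing(items={SECTIONS[2]: ["Placeholder item"]}, quick_hits=["Placeholder one-liner"])
    print(render(demo))
```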

The delivery channel matters. Pick wherever you actually open messages without thinking about it.

No Twitter. No newsletters. Ten minutes to read, maybe one link opened, then back to work.

What it costs

Almost nothing. That surprised me too.

The serverless function runs on the free tier: 100,000 requests per day are included, and I use four per month. LINE's Messaging API has a free monthly quota, and a single push to yourself stays well within it. The main cost is the model call to Grok, plus a small slice for the tool invocations, meaning the search queries the model fires to scan the web.

Per run, with tools firing eight or so search queries:

- Flagship reasoning (`grok-4.20-reasoning`): about 50,000 tokens in, 9,000 out. Roughly 16 cents per run, so about 60 cents a month on a weekly cadence, or around $4.80 a month if run daily.

- Fast reasoning (`grok-4-1-fast-reasoning`): about 12,000 tokens in, 4,000 out. Less than half a cent per run. Roughly 2 cents a month on a weekly cadence, or about 14 cents a month if run daily.
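The monthly figures are just the per-run cost multiplied by the number of runs. Here is a small sketch of that arithmetic, assuming four runs a month on a weekly cadence and thirty on a daily one. The fast tier uses half a cent as an upper bound, since the real per-run cost is somewhat lower. `monthly_cost_cents` is a hypothetical helper.

```python
WEEKLY_RUNS_PER_MONTH = 4   # assumption: four Sundays in a typical month
DAILY_RUNS_PER_MONTH = 30   # assumption: a 30-day month


def monthly_cost_cents(cents_per_run: float, runs_per_month: int) -> float:
    """Monthly model cost in cents for a fixed per-run cost."""
    return cents_per_run * runs_per_month


if __name__ == "__main__":
    flagship = 16.0  # cents per run, from the figures above
    fast_max = 0.5   # cents per run; "less than half a cent", so an upper bound
    for name, per_run in [("flagship", flagship), ("fast (upper bound)", fast_max)]:
        weekly = monthly_cost_cents(per_run, WEEKLY_RUNS_PER_MONTH)
        daily = monthly_cost_cents(per_run, DAILY_RUNS_PER_MONTH)
        print(f"{name}: {weekly:.0f} cents/month weekly, {daily:.0f} cents/month daily")
```

With these inputs the flagship comes to 64 cents a month weekly and 480 cents ($4.80) a month daily. The fast tier stays at or below 2 cents and 15 cents, which matches the rounded numbers above.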

I ran both side by side for a few weeks. The fast tier produces slightly thinner output — shorter meta section, sometimes one item fewer. But the structure holds. The filter still works. Part of why: Grok searches X via its built-in tools, and the source material there is already dense. Posts are short, opinionated, and compressed by the people who wrote them. The model isn't digging through long-form articles. That makes fast reasoning good enough for most runs. I stopped caring about the difference and stayed on fast. The flagship's extra depth isn't worth 36x the cost for a weekly briefing.

Weekly updates for 2 cents a month. Or daily for an entire month at 14 cents. No newspaper comes close.

What changed for me

Two things.

I stopped opening Twitter on purpose. This wasn't a discipline move. I genuinely don't feel the pull anymore, because I know the filter will catch anything that matters. The FOMO is gone because the delivery is guaranteed.

And I started testing more. Filter 2 from the original post — the 30-minute reality check — works much better when you already have a candidate lined up. You don't browse for tools to try. You get told what to try. The friction drops to near zero, and that's when the habit sticks.

If you want to build your own

A few things that might save you time:

- Don't over-engineer the pipeline. One function on a free-tier serverless platform is enough. You don't need a database, a queue, a dashboard. Cron, API, push.

- Pick a delivery channel you actually check. Not the one that seems professional. The one where you open messages reflexively.

- Iterate the prompt against real output. Save every run to a file, diff them across iterations. The prompt will look terrible for the first three tries. By iteration five you'll have something you trust.

- Let sections be empty. This is the single biggest quality signal. If the system never reports "nothing this week" somewhere, your filter is broken — it's padding.

- Start daily, then move to weekly. Daily gives you seven data points in seven days instead of one. Iterate fast on the prompt against real data, then settle into a weekly cadence.

- Use a model with search built in. The heavy lifting — scanning the web, finding primary sources — should be done by the model. Grok's Agent Tools, OpenAI's Responses API with web search, whatever you prefer. Let the model do its job instead of plumbing RSS feeds yourself.
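The "save every run, diff across iterations" advice needs very little tooling. Below is a minimal sketch using only Python's standard library. `save_run`, `diff_runs`, the directory layout, and the file labels are hypothetical, and the demo strings are placeholders, not real output.

```python
import difflib
import tempfile
from pathlib import Path


def save_run(directory: Path, label: str, output: str) -> Path:
    """Write one run's output to <directory>/<label>.md and return the path."""
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"{label}.md"
    path.write_text(output, encoding="utf-8")
    return path


def diff_runs(old: Path, new: Path) -> str:
    """Return a unified diff between two saved runs (empty string if identical)."""
    old_lines = old.read_text(encoding="utf-8").splitlines(keepends=True)
    new_lines = new.read_text(encoding="utf-8").splitlines(keepends=True)
    return "".join(
        difflib.unified_diff(old_lines, new_lines, fromfile=old.name, tofile=new.name)
    )


if __name__ == "__main__":
    # Temporary directory for the demo; a real setup would keep the files around.
    with tempfile.TemporaryDirectory() as tmp:
        runs = Path(tmp) / "runs"
        first = save_run(runs, "prompt-v1", "A revolutionary release!\n")
        second = save_run(runs, "prompt-v2", "Nothing worth reporting this week.\n")
        print(diff_runs(first, second))
```

Labelling each file with the prompt version makes it obvious which wording change produced which shift in the output.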

That's the whole thing. A tiny piece of software, a carefully tuned prompt, one notification a week.

The releases haven't slowed down. My attention to them has. And that was the point.