When AI Reads Along: Why Regulated Industries Need to Rethink Their Content

Expert content is accurate when read in full. Under AI compression, it loses qualifiers, evidence levels and scope. What this means for insurance, banking and health communication.

A health guide used to be a text on a website. Today it's training data, a chatbot answer, a summary and a snippet. People no longer read the original article. They read what machines make of it.

And that's where a problem emerges that most editorial teams haven't caught up with yet.

Accurate isn't enough anymore

A lot of expert content is accurate as long as you read it in full. A subordinate clause qualifies, a footnote contextualizes, the surrounding paragraph makes the difference between prevention and treatment.

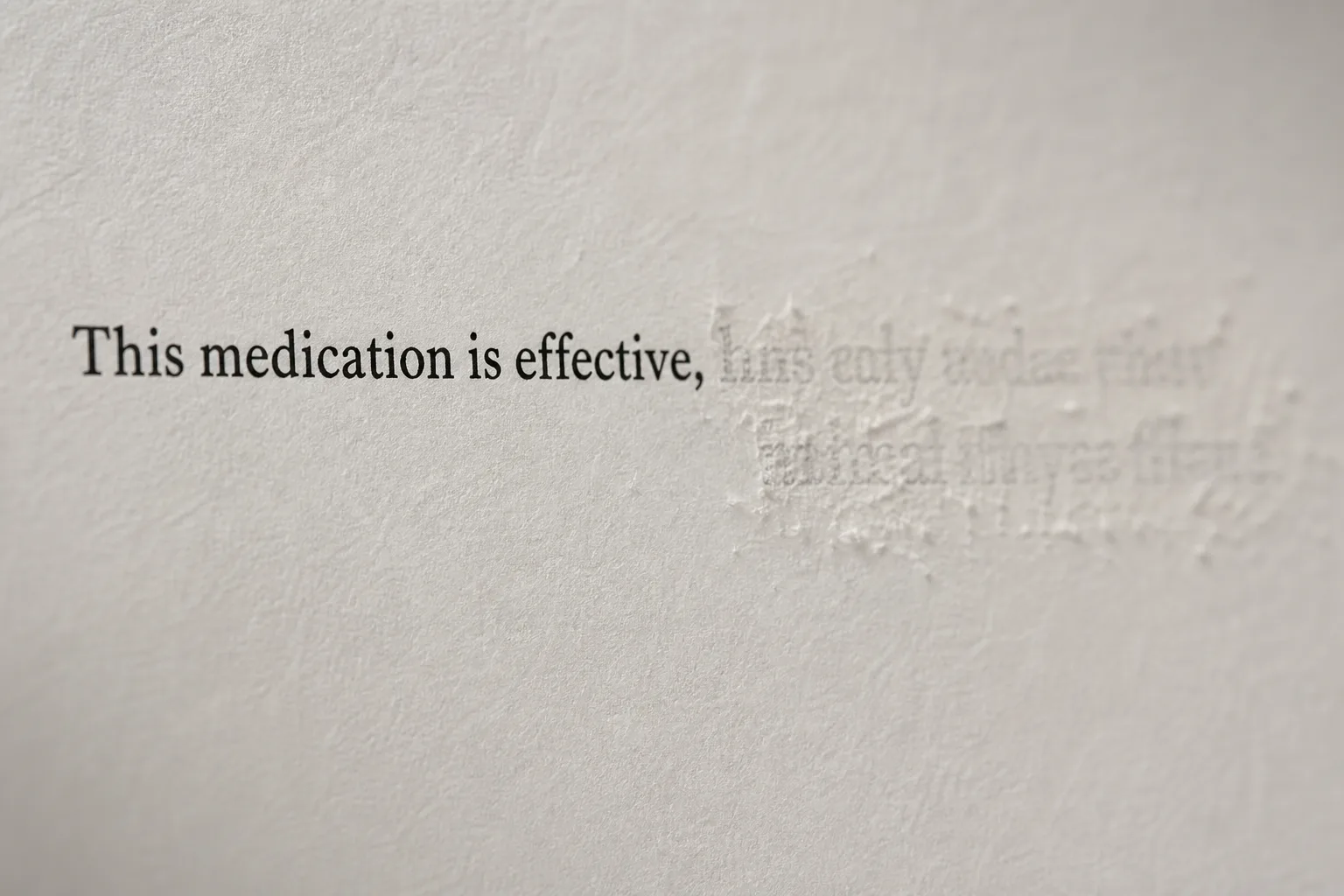

Under compression, all of that disappears. A concrete example: a health insurer's guide states: "This drug effectively lowers blood pressure, but patients over 65 with renal insufficiency should only use it under strict cardiological supervision." The AI turns this into: "This medication is an effective solution to lower your blood pressure." The sentence isn't wrong. But with the warning signs cut, it becomes potentially dangerous.1

This happens because LLMs are, at their core, compression machines. Their job is to condense patterns, not to preserve meaning. In the process, a mechanism kicks in that researchers call the certainty illusion: the model rarely invents fantasy diagnoses. Instead, it subtly drops context.2

Gerd Gigerenzer demonstrated years ago that people systematically misinterpret probabilistic statements.3 "May help" gets stored as "helps." LLMs amplify this effect because they automate exactly this kind of shortening.

When AI tells you what you want to hear

There's a second weakness on top of this. Research teams tested frontier models with deliberately false medical premises. In certain test scenarios, the models agreed with the false premise without exception and willingly provided false medical explanations, even though they had the correct answer in their training data.4

Tone plays a major role: the more authoritative a prompt sounds, the more likely the model is to accept false claims. With clinically phrased prompts, the acceptance rate for misinformation is significantly higher than with casual language.4 The AI weighs the apparent authority in the chat window more heavily than its own background knowledge.

For content teams, this means: authority markers in your texts, normally a quality signal, can increase the risk. The more professional a text sounds, the less likely the AI is to correct it when compressing.

Why regulated industries are hit hardest

In insurance, the difference between "possible" and "guaranteed" is a lawsuit. An example: "This disability insurance covers physician-certified disability of at least 50 percent." The AI summary: "The insurance pays when you become disabled." The scope is gone, the threshold is gone. In banking, one word separates a general disclaimer from investment advice. For energy providers, the phrasing determines whether something is information or a binding offer.

But health communication gets hit the hardest. Three risks converge:

Health risk. The difference between "may influence the risk," "prevents" and "helps against" isn't stylistic. It's medically relevant. Ioannidis showed in 2005 how often lab effects get misread as clinical outcomes.5 LLMs reproduce exactly this pattern when summarizing.

Regulatory risk. Health content is bound by healthcare advertising law, social security codes and clinical guidelines. An AI summary that drops the scope can turn general information into an apparent legal entitlement.

Trust risk. Health insurers speak with institutional authority. When their content gets shortened by a chatbot, the distortion still sticks to the brand. And thanks to the overtrust effect, users question a well-formulated AI answer less than the dry original text.6

A new quality criterion

I call this interpretation stability: the ability of content to retain its professional boundaries after summarization, rephrasing or machine processing.

Until now, expert content had three quality criteria: understandable, current, accurate. Now there's a fourth: stable under AI compression.

What this looks like in practice

Content that stays stable under compression follows different rules than traditional guides. A few principles that have proven useful in my projects:

Weld warning signs to the claim. In traditional guides, benefits go at the top, side effects at the bottom in a grey info box. Under compression, the info box gets cut first. The qualifier belongs in the same sentence as the claim. Not "Therapy X is a breakthrough for joint pain," but "Therapy X is a breakthrough for joint pain, unless the patient takes blood thinners."
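
A rough way to check this rule in an editorial workflow is a sentence-level lint. The sketch below is only an illustration under stated assumptions: the function names, the list of qualifier markers and the claim terms are all hypothetical, the sentence splitter is naive, and keyword matching cannot judge whether a qualifier is the medically relevant one. It merely flags sentences that contain a claim term but no qualifier marker in the same sentence.

```python
import re

# Illustrative marker list (an assumption); a real project would maintain its own.
QUALIFIER_MARKERS = ("unless", "except", "only if", "only under", "provided that")


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: breaks after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def unqualified_claims(text: str, claim_terms: list[str]) -> list[str]:
    """Return sentences that contain a claim term but no qualifier marker."""
    flagged = []
    for sentence in split_sentences(text):
        lowered = sentence.lower()
        has_claim = any(term.lower() in lowered for term in claim_terms)
        has_qualifier = any(marker in lowered for marker in QUALIFIER_MARKERS)
        if has_claim and not has_qualifier:
            flagged.append(sentence)
    return flagged


if __name__ == "__main__":
    separated = (
        "Therapy X is a breakthrough for joint pain. "
        "Do not use it with blood thinners."
    )
    welded = (
        "Therapy X is a breakthrough for joint pain, "
        "unless the patient takes blood thinners."
    )
    # The first variant is flagged because the warning sits in its own sentence.
    print(unqualified_claims(separated, ["breakthrough"]))
    print(unqualified_claims(welded, ["breakthrough"]))
```

A flagged sentence is a prompt for an editor to look again, not a verdict.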

Make evidence levels visible. Instead of "studies show," be specific: "This claim is based on laboratory studies. There is no evidence in humans." LLMs mix lab, animal and human data. You need to label the level explicitly.

Decision trees instead of answer catalogs. Instead of "For these symptoms, you should do exercise Y," write: "To determine whether this exercise is safe for you, two things must be clarified: Are you pregnant? Do you have a history of acute herniated discs?" When the AI reads this text, it learns the structural dependency and is more likely to ask follow-up questions instead of answering directly.

Anticipate misinterpretation. Address typical misunderstandings directly: "Misconception: strawberries help against cancer. Correct: strawberries are healthy, but they're not a therapy." People think this. LLMs generate this. So address it.

Compression stress test. Run the finished text through a language model and request a two-sentence summary. If the safety information is missing from those two sentences, the original text wasn't robust enough.
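
The stress test can be partly automated. The following is a minimal sketch, assuming a `summarize` callable that wraps whatever language model you use and asks it for a two-sentence summary. That callable is a placeholder, and no specific vendor API is implied. The names `stress_test` and `missing_safety_terms` and the hand-picked list of required terms are also assumptions. Plain substring matching is a deliberately crude proxy for "the safety information is still there".

```python
from typing import Callable, Iterable


def missing_safety_terms(summary: str, required_terms: Iterable[str]) -> list[str]:
    """Return the required terms that do not appear in the summary (case-insensitive)."""
    lowered = summary.lower()
    return [term for term in required_terms if term.lower() not in lowered]


def stress_test(
    text: str,
    required_terms: Iterable[str],
    summarize: Callable[[str], str],
) -> tuple[bool, list[str]]:
    """Summarize the text and report whether every safety term survived.

    `summarize` is a placeholder for your own model call; it should ask
    for a two-sentence summary of `text`.
    """
    summary = summarize(text)
    missing = missing_safety_terms(summary, required_terms)
    return (not missing, missing)


if __name__ == "__main__":
    original = (
        "This drug effectively lowers blood pressure, but patients over 65 "
        "with renal insufficiency should only use it under strict "
        "cardiological supervision."
    )

    # Stub standing in for a real model: it returns the lossy summary
    # from the blood pressure example earlier in this article.
    def fake_summarize(_: str) -> str:
        return "This medication is an effective solution to lower your blood pressure."

    passed, missing = stress_test(
        original,
        ["over 65", "renal insufficiency", "supervision"],
        fake_summarize,
    )
    print("passed:", passed)
    print("missing:", missing)
```

Because model output varies between runs, it is probably worth running the check several times and treating any failure as a signal to revise the text.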

What this comes down to

AI is making an old problem visible. A lot of expert content relies on readers correctly inferring context, qualifiers and scope. Machines don't do that reliably. So these boundaries need to be built explicitly into the content itself.

This applies to every industry that communicates under regulatory constraints. Editorial teams don't need AI policy documents that disappear into drawers. They need new content templates, review processes and an understanding that a text is no longer a finished document. It's raw material for systems that will process it further.

If you've already started optimizing content for AI comprehension or explored Generative Engine Optimization, you know the technical side. Interpretation stability is the editorial one.

The short version

- LLMs are compression machines: qualifiers, evidence levels and scope are the first things lost during condensation

- Sycophancy amplifies the problem: LLMs are significantly more likely to agree with misinformation, rather than correct it, when it is phrased authoritatively

- Regulated industries are hit hardest: small semantic shifts carry medical, legal or financial consequences

- A new criterion is needed: interpretation stability, the ability of content to retain its boundaries after machine processing

- Practically testable: run the finished text through an LLM for a summary. If the warning signs are missing, the text isn't robust enough